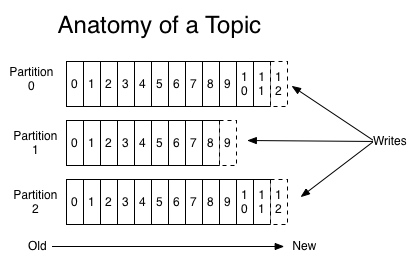

In Kafka, a topic is a category or feed name under which messages are stored and published. Kafka further breaks each topic's log up into partitions, which is the interesting part here.

Each partition is an ordered, immutable sequence of records that is continually appended to—a structured commit log. The records in the partitions are each assigned a sequential id number called the offset that uniquely identifies each record within the partition.
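As a rough illustration of that idea, a partition can be modeled as an append-only list in which a record's offset is simply its position. This is a minimal Python sketch, not Kafka's storage code; the `Partition` class and its methods are hypothetical names introduced only for this example.

```python
class Partition:
    """Toy model of one partition: an append-only list of records."""

    def __init__(self):
        self._records = []

    def append(self, record):
        """Append a record and return the offset assigned to it."""
        offset = len(self._records)  # next sequential id in this partition
        self._records.append(record)
        return offset

    def read(self, offset):
        """Return the record stored at the given offset."""
        return self._records[offset]


p = Partition()
first = p.append("message1")   # assigned offset 0
second = p.append("message2")  # assigned offset 1
```

Offsets are only meaningful inside one partition: two partitions of the same topic each start counting from 0.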

The partitions in the log serve several purposes. First, they allow the log to scale beyond a size that will fit on a single server. Each individual partition must fit on the servers that host it, but a topic may have many partitions, so it can handle an arbitrary amount of data. Second, they act as the unit of parallelism (more on that in a bit).

To significantly increase message-handling throughput, consider having multiple pods (instances) consume from multiple partitions in parallel. The simple Kafka commands below simulate this case.

Alter the topic to use two partitions

kafka-topics --alter --zookeeper localhost:2181 --topic alarm --partitions 2

Count the partitions in the alarm topic

kafka-topics --describe --zookeeper localhost:2181 --topic alarm

Show the current message count (latest offset) of each partition

kafka-run-class kafka.tools.GetOffsetShell --broker-list localhost:9092 --topic alarm
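GetOffsetShell prints one line per partition in the form `topic:partition:offset`. To turn that into a single total, the offsets can be summed. The following is a small Python sketch with made-up sample output; `total_messages` is a hypothetical helper, and the sum matches the number of stored messages only if no records have been removed by retention.

```python
def total_messages(output):
    """Sum the latest offsets from lines shaped like 'topic:partition:offset'."""
    total = 0
    for line in output.strip().splitlines():
        # Split from the right so a topic name containing ':' would still parse.
        topic, partition, offset = line.rsplit(":", 2)
        total += int(offset)
    return total


# Hypothetical output for a two-partition topic, not taken from a real run.
sample = """alarm:0:3
alarm:1:2"""
print(total_messages(sample))  # prints 5
```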

Send messages with different keys

kafka-console-producer --broker-list localhost:9092 --topic alarm --property "parse.key=true" --property "key.separator=:"

>k1:message1

>k2:message2

>k3:message3

>...
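The key matters because, for keyed records, Kafka's default partitioner hashes the key and takes the result modulo the number of partitions, so records with the same key land in the same partition. The Python sketch below shows the principle only: it uses `zlib.crc32` as a stand-in hash, whereas Kafka itself uses murmur2, so the partition numbers it produces will not match a real cluster. `partition_for` is a hypothetical name.

```python
import zlib


def partition_for(key, num_partitions):
    """Map a key to a partition index (stand-in hash, NOT Kafka's murmur2)."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


for key in ["k1", "k2", "k3"]:
    # The same key always maps to the same partition for a fixed partition count.
    print(key, "->", partition_for(key, 2))
```

Because the mapping depends on the partition count, altering the number of partitions can move a given key to a different partition for records sent afterwards.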

Each consumer can be assigned a specific partition number (0, 1, ...) and handle only that partition's messages

kafka-console-consumer --bootstrap-server localhost:9092 --from-beginning --topic alarm --property print.key=true --partition 0

kafka-console-consumer --bootstrap-server localhost:9092 --from-beginning --topic alarm --property print.key=true --partition 1
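With more pods, the same idea becomes a mapping from partitions to consumers. The Python sketch below spreads partitions over pods in round-robin order. It is an illustration under assumptions, not Kafka's group coordinator: `assign_round_robin` and the pod names are hypothetical.

```python
def assign_round_robin(partitions, consumers):
    """Distribute partition numbers over consumers in round-robin order."""
    assignment = {consumer: [] for consumer in consumers}
    for i, partition in enumerate(sorted(partitions)):
        owner = consumers[i % len(consumers)]
        assignment[owner].append(partition)
    return assignment


# Two partitions, two pods: each pod handles exactly one partition.
plan = assign_round_robin([0, 1], ["pod-a", "pod-b"])

# Two partitions, three pods: the third pod is left with nothing to read.
spare = assign_round_robin([0, 1], ["pod-a", "pod-b", "pod-c"])
```

The second case shows why the partition count bounds the useful parallelism: adding pods beyond the number of partitions does not increase throughput.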
